Codex

by OpenAI

At a glance

- Price: $8/mo
- Free tier: Yes
- Platform: Desktop app (macOS, Windows), CLI, IDE extension, Web
- Best for: Cloud-delegated multi-agent tasking
- Learning curve: Moderate
- Last update: 2026-03-22

Our take

Editorial verdict · We Did The Homework

Verdict

The broadest-surface coding agent available, with cloud task delegation and enterprise governance that no IDE plugin matches. It's most useful when you have a backlog of parallelizable tasks to delegate and a team large enough to need governance controls. Weigh the reported reliability problems, including mid-task disconnects and latency regressions, before committing.

The platform

The name Codex referred to a language model OpenAI retired in 2022. What they shipped under the same name in 2025 is categorically different: a coding agent platform covering a desktop app, CLI, IDE extension, web interface, and cloud task delegation, all tied to a single OpenAI account. One account, four surfaces, async tasks that run while you're not at your keyboard. No competitor offers that breadth in a single subscription.

Cloud tasking

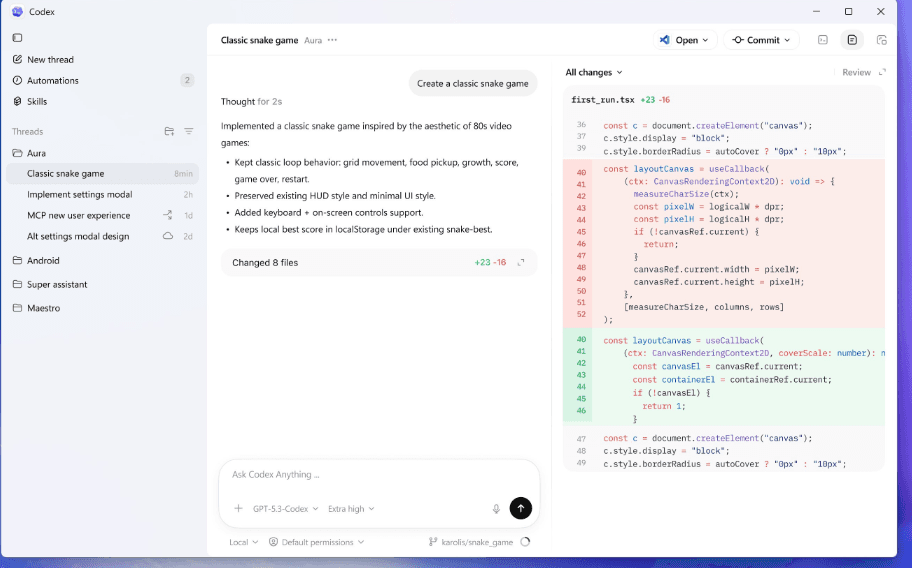

The cloud tasking model is the feature that doesn't exist elsewhere in the same form. Trigger a code review by tagging @Codex in a GitHub comment. Route a Linear ticket to an automated Codex task via the Automations feature. Schedule an unattended refactoring run overnight. We delegated a multi-file refactor as a cloud task and checked back an hour later. Waiting for us was a structured diff, ready for review. An IDE plugin can't do this.
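As a rough illustration of the GitHub trigger, here is a minimal sketch of how a script might post the tagging comment through GitHub's REST API. Pull request comments go through the issue-comments endpoint. The comment wording, the repository names, and the token placeholder are all assumptions for illustration; check the current Codex documentation for the exact phrasing it responds to. The function only builds the request and leaves sending to the caller.

```python
import json
import urllib.request

GITHUB_API = "https://api.github.com"


def build_review_comment(owner: str, repo: str, pr_number: int, token: str,
                         body: str = "@Codex please review this change"
                         ) -> urllib.request.Request:
    """Build (but do not send) a request that comments on a pull request.

    The default body is an assumed trigger phrase, not a confirmed one.
    """
    url = f"{GITHUB_API}/repos/{owner}/{repo}/issues/{pr_number}/comments"
    payload = json.dumps({"body": body}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        method="POST",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    # Hypothetical repository and pull request number.
    req = build_review_comment("example-org", "example-repo", 42, token="<token>")
    print(req.get_method(), req.full_url)
    # To actually post the comment: urllib.request.urlopen(req)
```

Keeping the request construction separate from the network call makes the sketch easy to inspect before anything is posted to a real repository.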

Enterprise governance

The enterprise governance layer is the deepest in the category: RBAC, SAML, SCIM, data residency controls, compliance log export APIs, and enterprise key management. The AGENTS.md instruction system shapes agent behavior at the project, team, and org level, and admins can lock configurations via a managed requirements.toml. For organizations where AI coding needs to operate under IT governance, no competitor has published controls this detailed.

The honest problems

The honest problems start with reliability. Community threads on GitHub and Hacker News document reconnection instability and latency regressions frequently enough to be a pattern, not isolated incidents. OpenAI has shipped fixes for specific regression windows, but a subset of users experienced repeated disconnects that interrupted mid-task execution. The pricing situation is also an embarrassment at OpenAI's scale: the Business plan appears at $25/user/month on one official page and $30/user/month on the Codex-specific pricing page.
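To put the Business pricing gap in budget terms, here is a small worked example. The 40-seat team size is hypothetical, and the calculation assumes each listed price is charged per user per month for twelve months, which neither page is confirmed to guarantee.

```python
def annual_cost(seats: int, price_per_user_month: int) -> int:
    """Yearly spend for a team, assuming a flat per-user monthly price."""
    return seats * price_per_user_month * 12


seats = 40  # hypothetical team size
low = annual_cost(seats, 25)   # price shown on one official page
high = annual_cost(seats, 30)  # price shown on the Codex-specific page
print(f"{seats} seats: ${low:,.0f} vs ${high:,.0f} per year, gap ${high - low:,.0f}")
# prints "40 seats: $12,000 vs $14,400 per year, gap $2,400"
```

At that team size, the $5 difference between the two pages is a 20% swing in the annual bill, which is why the inconsistency matters for procurement.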

Versus the field

Compared to Claude Code, Codex has broader surface coverage and stronger enterprise controls but trails on complex multi-file reasoning. Compared to Cursor, Codex lacks the IDE-native tab completion but wins on async automation and governance depth. The developer who gets the most from Codex isn't choosing between Codex and an IDE plugin. They're asking whether they want an agent they can fire at a backlog of tickets and trust to return with reviewable results.

What stands out

Cloud Task Delegation

Trigger coding tasks asynchronously from GitHub comments, Slack messages, or Linear tickets using @Codex tagging or the Automations system. Tasks run in hosted cloud environments and return structured diffs — no local machine required. This is the defining capability that separates Codex from IDE-based competitors.

Multi-Surface Continuity

One OpenAI account spans the desktop app, CLI, IDE extension, and web. Context, task history, and configurations persist across all surfaces — start a task in the app, hand it off to a CLI session, or review the output on the web without re-authenticating or losing state.

AGENTS.md Instruction Layering

Project-level, team-level, and org-level instruction files that shape every agent interaction. Admins can lock certain configurations via managed requirements.toml in enterprise environments, enforcing coding standards and security policies across all Codex usage in the organization.
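The layering idea can be pictured with a short sketch. This is not Codex's actual merge logic, which we have not inspected. It assumes instructions are combined from the broadest scope to the most specific, and that admin-managed settings override local ones. The function names and the setting keys are hypothetical.

```python
from typing import Mapping

# Assumed precedence: broadest scope first, most specific last.
LAYER_ORDER = ("org", "team", "project")


def layer_instructions(layers: Mapping[str, str]) -> str:
    """Join instruction text from broadest to most specific scope."""
    parts = []
    for scope in LAYER_ORDER:
        text = layers.get(scope, "").strip()
        if text:  # skip scopes that have no instruction file
            parts.append(f"# {scope} instructions\n{text}")
    return "\n\n".join(parts)


def resolve_settings(managed: Mapping[str, object],
                     local: Mapping[str, object]) -> dict:
    """Merge settings so admin-managed keys always win over local ones."""
    merged = dict(local)
    merged.update(managed)  # managed values overwrite any local value
    return merged


if __name__ == "__main__":
    print(layer_instructions({
        "project": "Use pytest for all tests.",
        "org": "Never commit secrets.",
    }))
    # Hypothetical keys: the locked value replaces the developer's choice.
    print(resolve_settings({"network_access": False},
                           {"network_access": True, "editor": "vim"}))
```

The point of the sketch is the direction of precedence: a developer can add project detail, but cannot loosen what an admin has locked.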

Worktrees for Parallel Tasking

Run multiple coding tasks in isolated git worktrees simultaneously within the same repository. The desktop app supports this natively, enabling parallel-branch development without context bleed between agents — useful for testing multiple approaches or handling multiple tickets at once.
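For readers who have not used worktrees, the underlying git mechanism looks roughly like this. The sketch uses the standard `git worktree add` command to give each task its own branch and directory. The branch and directory naming scheme is our own assumption, not how the desktop app names things.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, task: str, base: str = "main") -> list[str]:
    """Return the git command that creates an isolated worktree for one task."""
    branch = f"task/{task}"                      # hypothetical branch naming
    path = repo.parent / f"{repo.name}-{task}"   # sibling directory per task
    return ["git", "-C", str(repo), "worktree", "add",
            "-b", branch, str(path), base]


def create_worktrees(repo: Path, tasks: list[str], base: str = "main") -> None:
    """Create one worktree per task; raises if any git call fails."""
    for task in tasks:
        subprocess.run(worktree_command(repo, task, base), check=True)


if __name__ == "__main__":
    # Print the commands instead of running them against a real repository.
    for task in ["fix-login", "upgrade-deps"]:
        print(" ".join(worktree_command(Path("/src/app"), task)))
```

Because each worktree has its own checkout and branch, two agents editing the same file never touch each other's working copy. Conflicts only surface later, at merge time.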

Enterprise Governance Stack

RBAC, SAML SSO, SCIM provisioning, data residency controls, compliance log export APIs, and enterprise key management are all documented in the admin setup. The separately positioned Codex Security product adds vulnerability discovery and remediation over connected repositories.

Codex-Spark Low-Latency Preview

A research preview model running on Cerebras hardware that dramatically reduces time-to-first-token for real-time coding interactions. At 128k context and text-only at launch, it is not yet a full replacement for GPT-5.4, but it signals OpenAI's intent to compete with Cursor's snappy inline experience.

Pricing

Free

$0

- Limited Codex usage via ChatGPT

- Basic app and web access

Go

$8/mo

- Entry-level ChatGPT plan

- Limited Codex access

Plus

$20/mo

- Codex access across app, CLI, and IDE

- GPT-5.4 and GPT-5.4-mini access

- Cloud task delegation

Pro

$200/mo

- Highest usage limits across all models

- Priority access to GPT-5.4 and GPT-5.3-Codex

- Full cloud automation features

- All surfaces: app, CLI, IDE, web

Business

$25–$30/user/mo (official pricing pages disagree)

- Team policies and shared configurations

- Admin usage controls and analytics

- GitHub code review and Slack/Linear integrations

Enterprise

Contact Sales

- RBAC, SAML SSO, and SCIM provisioning

- Data residency and retention controls

- Compliance log export API

- No training on org data by default

- Enterprise key management (EKM)

API

Pay-per-token

- Direct API access to GPT-5.x Codex models

- Usage-based billing without subscription caps

- Full SDK and GitHub Action integration

Bottom line

Choose Codex if your team needs cloud task delegation, async automation, and enterprise governance under a single OpenAI account. Skip it if you want snappy inline completions or if consistent reliability is non-negotiable for your workflow.