Fastest route from blank page to presentable deck

In our run, Gamma nailed design, content, export quality, and speed. The weak spot was follow-up prompt editing after generation.

Honest, tested reviews of the AI tools worth your time. We pick winners, flag the traps, and keep it to tools that actually matter.

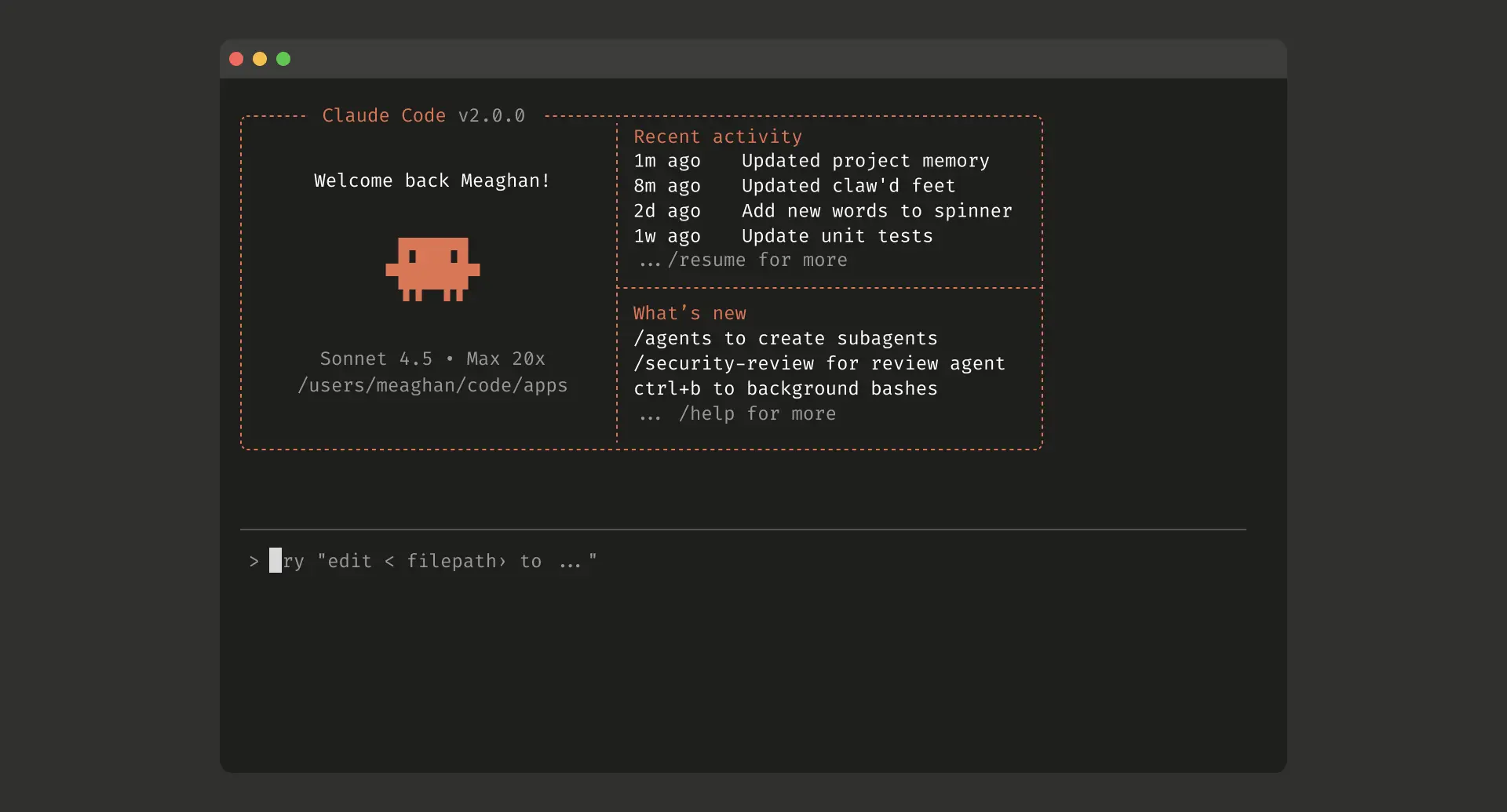

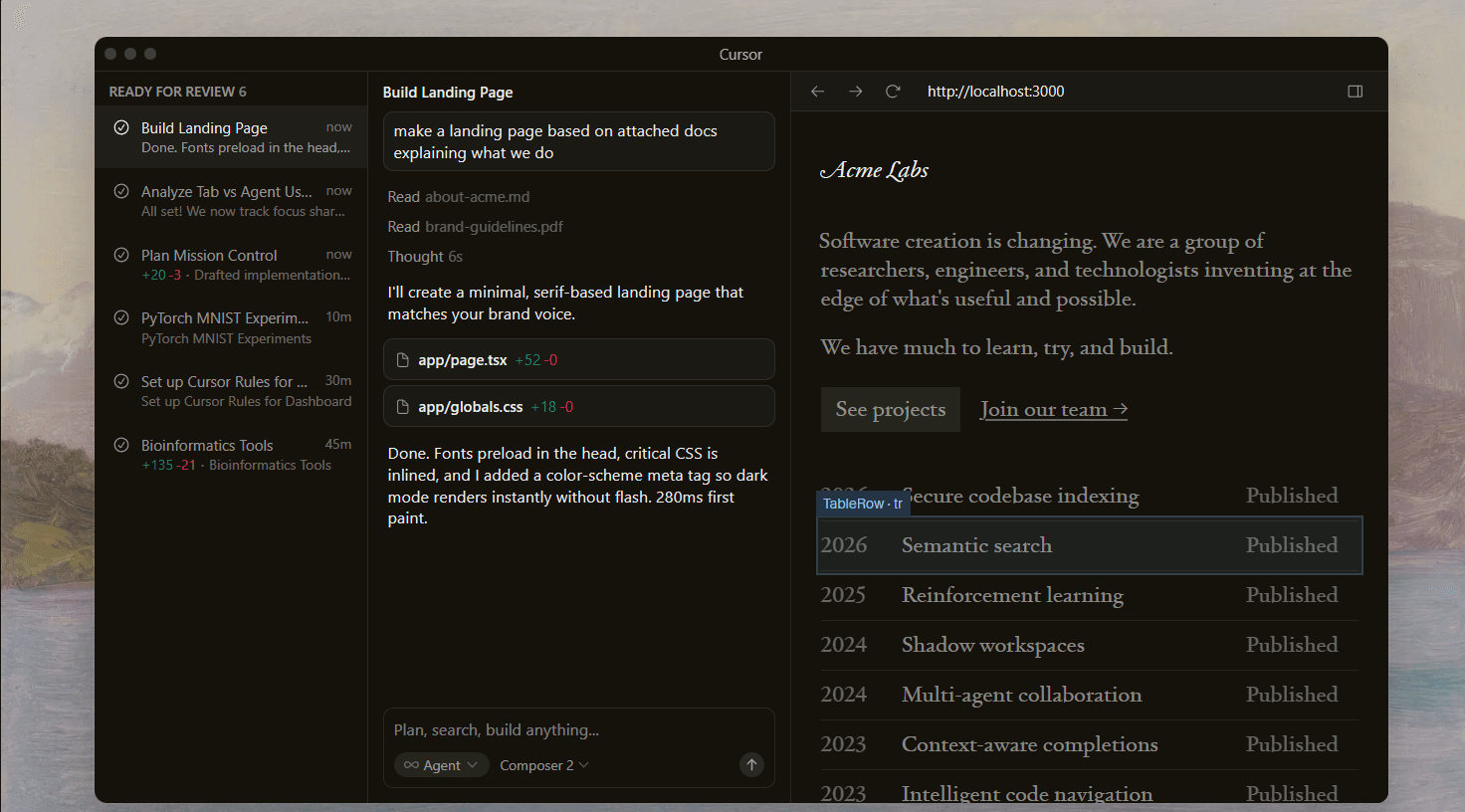

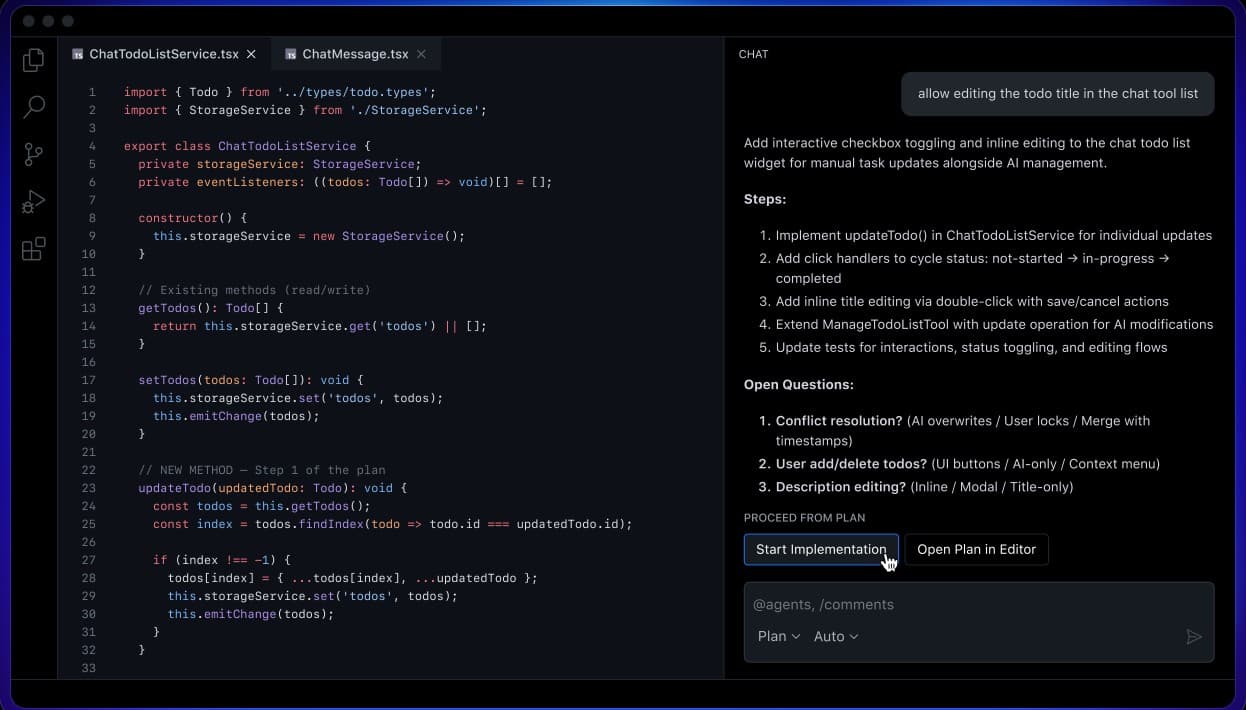

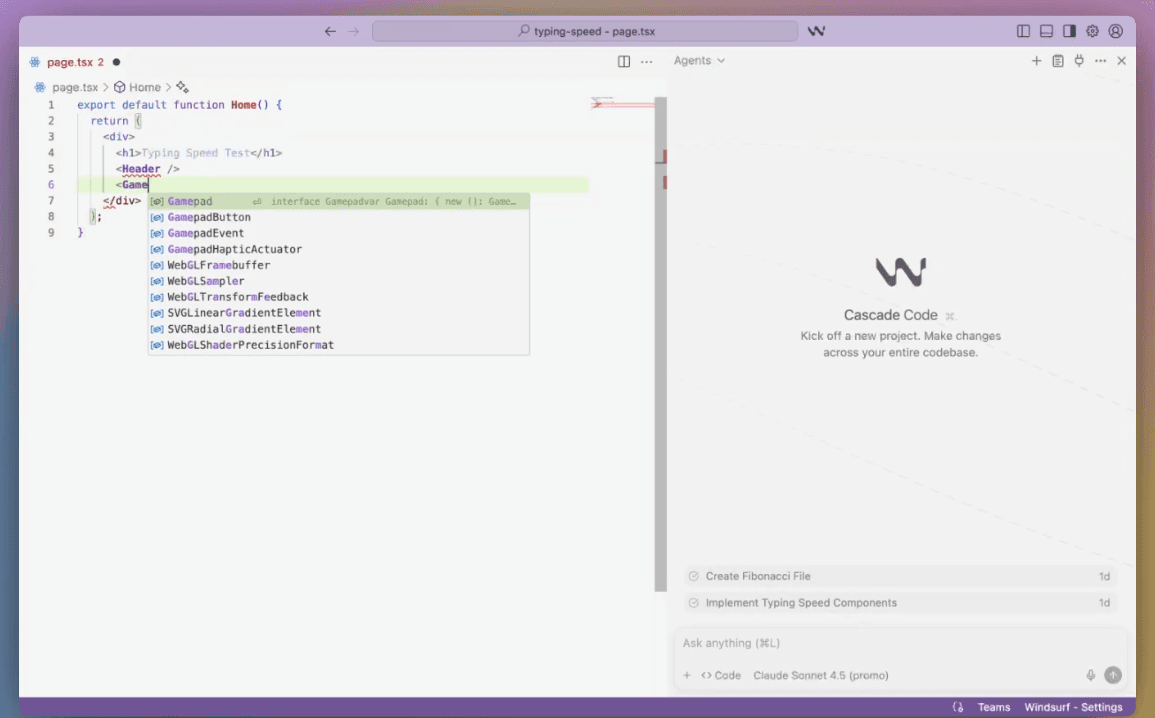

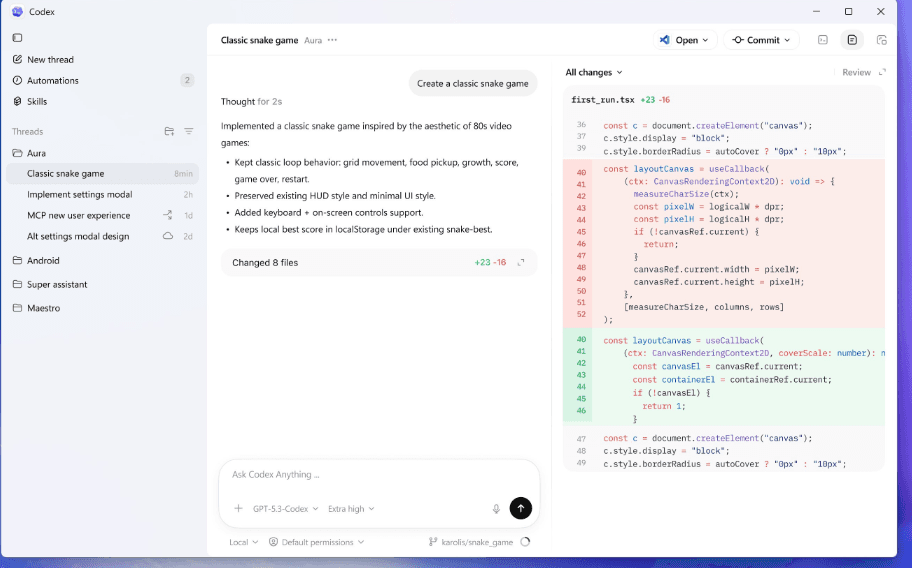

AI-powered coding assistants that can write, edit, debug, and refactor code. We tested each on real-world tasks: multi-file edits, bug fixes, greenfield projects, and legacy code navigation.

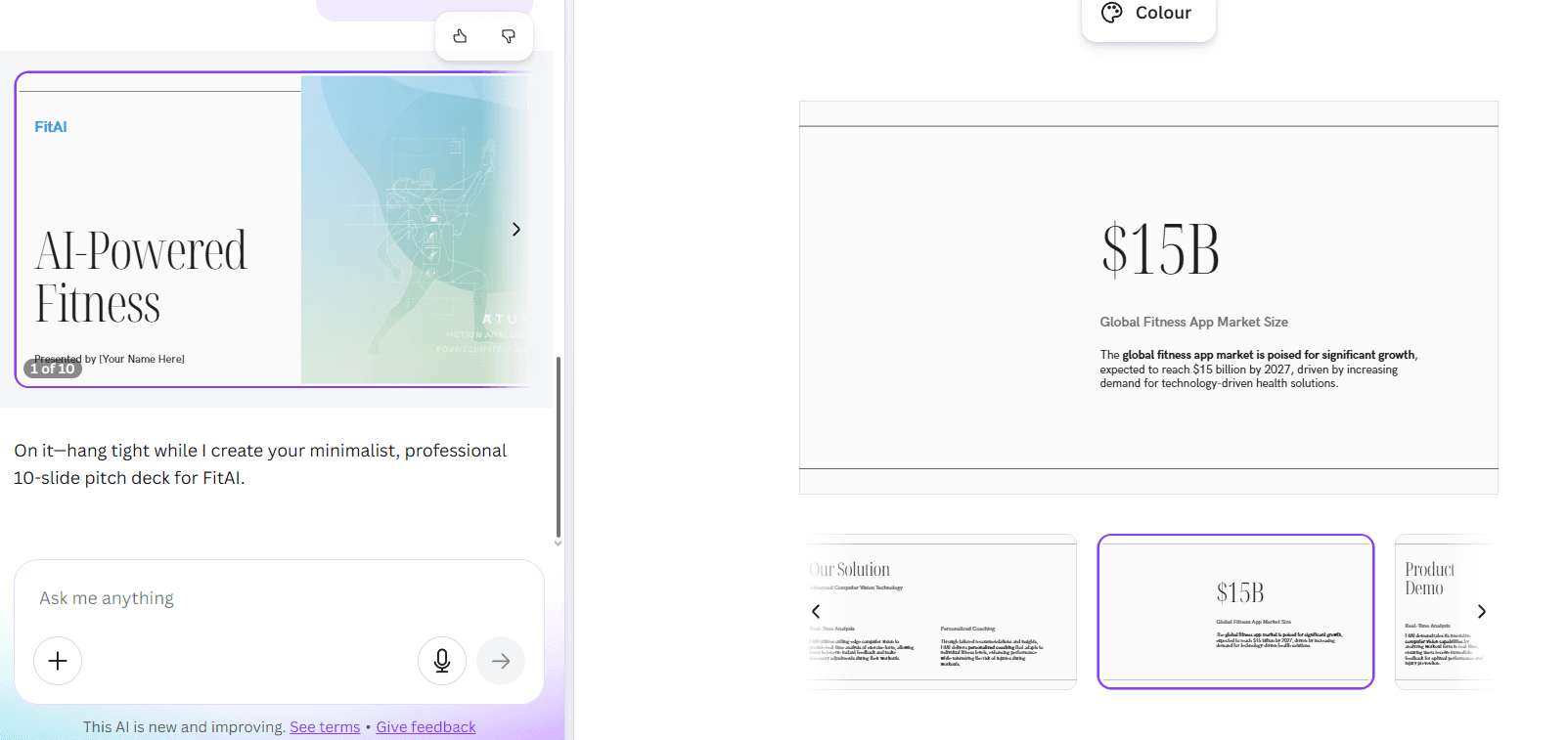

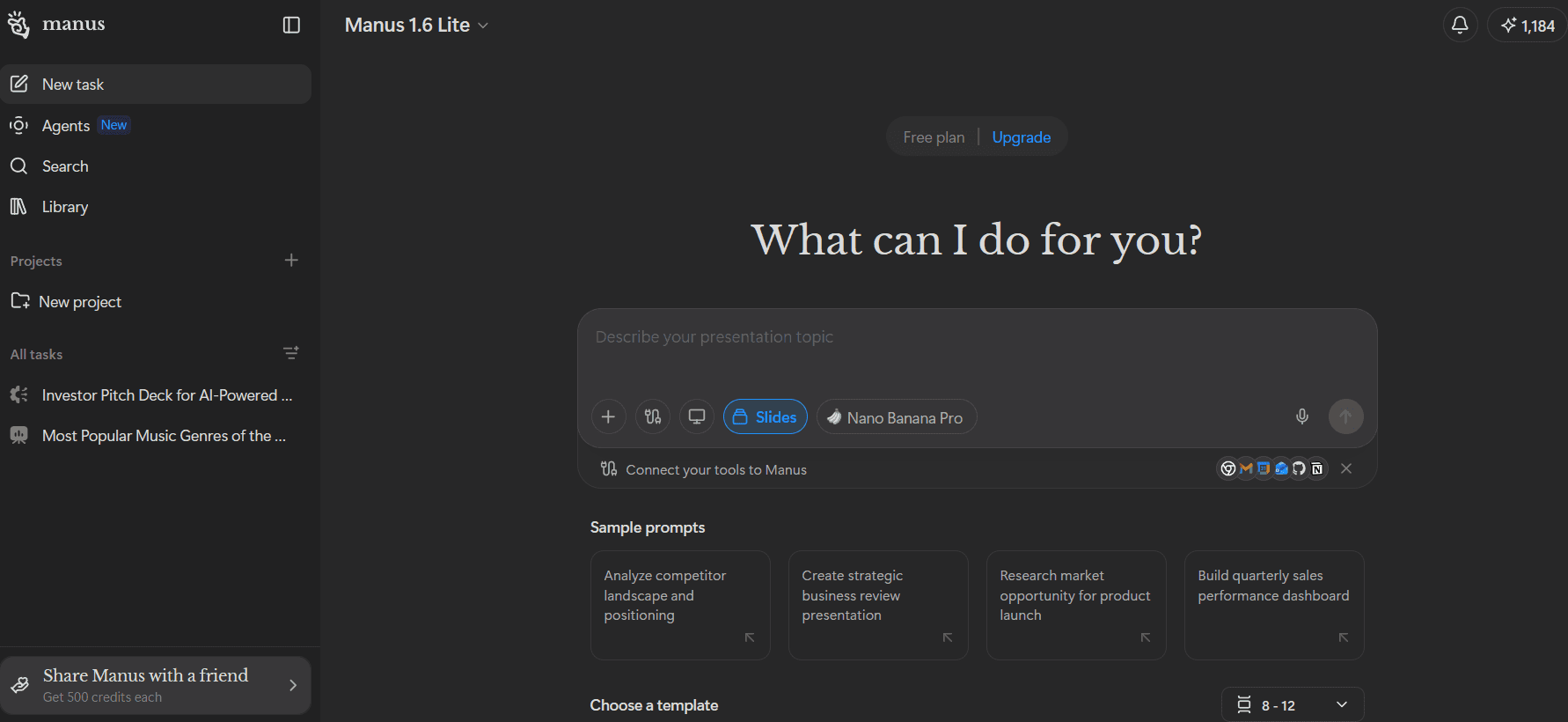

AI-powered tools that generate, design, and enhance slide decks from a prompt or document. We tested each on slide quality, design flexibility, and export capabilities.

Beautiful.ai delivered one of the best-looking decks we tested, but follow-up prompt edits were almost nonfunctional.

In our testing, Plus AI was very fast and offered decent slide variety, but design quality, visuals, and prompt-based editing were too weak for high-stakes decks.

Canva AI gets you to a presentable first draft quickly, then hands the real work back to you in the editor.

Manus gave us excellent substance and speed, but we still had to manually elevate visual quality for client-facing work.

We ran two conflicting refactors simultaneously: one added async/await, the other updated the error-handling layer. Neither agent knew the other existed. Both finished cleanly.

Cursor's tab completion is still the best in the category. The harder question is whether the usage bill stays predictable.

At $10/month with the widest IDE support in the market, Copilot covers 80% of what you need. The question is whether you need the other 20%.

Windsurf's compliance stack is the deepest in the category. The reliability and pricing stories need to catch up.

One account, four surfaces, and async cloud tasks that run while you're not at your keyboard. The fire-and-forget model is real. The reliability variance is too.

01 — Research

Every tool starts with a structured sweep: official website, pricing page, documentation, changelog, and security or trust center. We follow that with third-party expert reviews and community sentiment from Reddit, Hacker News, and developer forums. Official marketing claims are explicitly cross-referenced against real-world experience.

02 — Scoring

We score each tool across dimensions that actually matter for what it does. Output quality, reliability, pricing transparency, privacy practices, and workflow fit are universal. From there, the rubric gets specific to the category. What counts as a dealbreaker for one type of tool is a non-issue for another. Every dimension gets a score of 1–5 and a one-line justification. Nothing averages itself into irrelevance.
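For readers who think in data structures, the rubric described above could be modeled roughly as follows. This is a minimal sketch, not our actual tooling: the names `DimensionScore`, `build_scorecard`, and `weakest` are hypothetical, and reporting the lowest-scoring dimension instead of an average is one assumed way to keep a weak score visible.

```python
from dataclasses import dataclass

# The five universal dimensions named in the text above.
UNIVERSAL_DIMENSIONS = (
    "output quality",
    "reliability",
    "pricing transparency",
    "privacy practices",
    "workflow fit",
)


@dataclass(frozen=True)
class DimensionScore:
    """One rubric row: a 1-5 score plus a one-line justification."""
    dimension: str
    score: int
    justification: str

    def __post_init__(self):
        if not 1 <= self.score <= 5:
            raise ValueError(f"{self.dimension}: score must be 1-5")
        text = self.justification.strip()
        if not text or "\n" in text:
            raise ValueError(f"{self.dimension}: justification must be one line")


def build_scorecard(scores, category_dimensions=()):
    """Index scores by dimension and require every expected dimension.

    category_dimensions is a hypothetical hook for the category-specific
    part of the rubric (for example, export quality for slide tools).
    """
    required = set(UNIVERSAL_DIMENSIONS) | set(category_dimensions)
    by_name = {s.dimension: s for s in scores}
    missing = required - by_name.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return by_name


def weakest(scorecard):
    """Return the lowest-scoring dimension (assumed alternative to averaging)."""
    return min(scorecard.values(), key=lambda s: s.score)
```

Keeping each dimension as its own record, with no overall mean, means a low score in one area stays visible in the final scorecard.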

03 — Editorial

We test the tools. We write the verdicts. Every review picks a winner, names the limitations outright, and flags hidden costs in pricing. Head-to-head comparisons focus on the dimensions that actually separate the two. No hedging. No paid placements. No category that ends without a clear recommendation.